How the Open-Source ImageBind Model Paves the Way for Future Generative AI Systems

Meta, the company formerly known as Facebook, has released a new open-source AI model called ImageBind. The model is a research project that links six types of data into a single multidimensional index, or "embedding space," with the goal of enabling multisensory experiences. The six types are text, audio, visual data, thermal readings, depth, and movement readings. Meta says ImageBind is the first model to combine all six in one system, and the work points toward future generative AI systems that could create immersive, multisensory experiences. This article explores how the ImageBind model works and its potential applications.

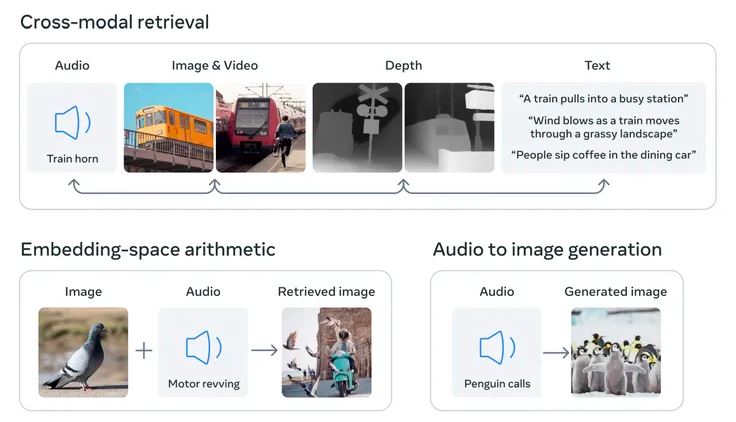

The core concept of the ImageBind research is linking together multiple types of data into a single embedding space. This idea has been fundamental to the recent boom in generative AI. For example, AI image generators like DALL-E, Stable Diffusion, and Midjourney all rely on systems that link together text and images during the training stage. They look for patterns in visual data while connecting that information to descriptions of the images. This is what enables these systems to generate pictures that follow users' text inputs.

The ImageBind model takes this concept further by incorporating six types of data: visual, thermal, text, audio, depth, and movement readings. The inclusion of movement readings generated by an inertial measurement unit (IMU) is particularly intriguing. IMUs are found in phones and smartwatches, where they're used for a range of tasks, from switching a phone's display between landscape and portrait to distinguishing between different types of physical activity.
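To make the idea of a single shared embedding space more concrete, here is a minimal Python sketch of cross-modal retrieval. It is not ImageBind's code or API: the vectors are made-up, hand-picked 3-dimensional stand-ins for what trained encoders would output, and the names `EMBEDDINGS`, `cosine`, and `retrieve` are hypothetical. The sketch only illustrates that once every modality lives in the same vector space, a query from one modality can be compared against items from any other using one similarity measure.

```python
import math

# Hypothetical toy embeddings. In a real system each vector would come from a
# modality-specific encoder; here they are hand-picked 3-D values that all
# share one vector space, keyed by (modality, description).
EMBEDDINGS = {
    ("audio", "waves crashing"): [0.9, 0.1, 0.0],
    ("audio", "dog barking"): [0.0, 0.2, 0.9],
    ("thermal", "cold sea spray"): [0.8, 0.3, 0.1],
    ("imu", "rocking motion"): [0.7, 0.0, 0.2],
    ("text", "a dog in a park"): [0.1, 0.1, 0.95],
}


def cosine(a, b):
    """Cosine similarity: compares the direction of two vectors, ignoring length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def retrieve(query, target_modality):
    """Return the description of the closest item in the target modality."""
    candidates = [
        (key, vec) for key, vec in EMBEDDINGS.items() if key[0] == target_modality
    ]
    if not candidates:
        raise ValueError(f"no items for modality {target_modality!r}")
    best_key, _ = max(candidates, key=lambda kv: cosine(query, kv[1]))
    return best_key[1]
```

For example, `retrieve([0.85, 0.2, 0.05], "audio")`, where the query vector stands in for the text "a ship at sea," returns `"waves crashing"`, because that clip's toy vector points in nearly the same direction. Cosine similarity is used because it compares direction rather than magnitude, a common choice when comparing embeddings.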

The idea is that future AI systems will be able to cross-reference these data types in the same way that current AI systems do with text inputs. Imagine a futuristic virtual reality device that generates not only audio and visual output but also your physical environment and movement on a stage. You might ask it to emulate a long sea voyage, and it would not only place you on a ship with the noise of the waves in the background but also simulate the rocking of the deck under your feet and the cool breeze of the ocean air.

Meta has noted that other streams of sensory input could be added to future models, including touch, speech, smell, and brain fMRI signals. According to Meta, this research brings machines one step closer to humans' ability to learn simultaneously, holistically, and directly from many different forms of information.

The immediate applications of research like this will likely be much more limited. For example, last year Meta demonstrated an AI model that generates short, blurry videos from text descriptions. Work like ImageBind shows how future versions of such a system could incorporate other streams of data, for example by generating audio to match the video output.

The research is also notable because Meta is open-sourcing the underlying model. Opponents of open-sourcing, such as OpenAI, argue that the practice harms the companies that build these models, because rivals can copy their work, and that it could be dangerous by allowing malicious actors to exploit state-of-the-art AI models. Advocates respond that open-sourcing allows third parties to scrutinize the systems for faults and ameliorate some of their failings. It may even provide a commercial benefit, since it effectively lets companies recruit third-party developers as unpaid workers to improve their models.